Transforms#

Generic Interfaces#

Transform#

- class monai.transforms.Transform[source]#

An abstract class of a

Transform. A transform is callable that processesdata.It could be stateful and may modify

datain place, the implementation should be aware of:thread safety when mutating its own states. When used from a multi-process context, transform’s instance variables are read-only. thread-unsafe transforms should inherit

monai.transforms.ThreadUnsafe.datacontent unused by this transform may still be used in the subsequent transforms in a composed transform.storing too much information in

datamay cause some memory issue or IPC sync issue, especially in the multi-processing environment of PyTorch DataLoader.

See Also

- abstract __call__(data)[source]#

datais an element which often comes from an iteration over an iterable, such astorch.utils.data.Dataset. This method should return an updated version ofdata. To simplify the input validations, most of the transforms assume thatdatais a Numpy ndarray, PyTorch Tensor or string,the data shape can be:

string data without shape, LoadImage transform expects file paths,

most of the pre-/post-processing transforms expect:

(num_channels, spatial_dim_1[, spatial_dim_2, ...]), except for example: AddChannel expects (spatial_dim_1[, spatial_dim_2, …])

the channel dimension is often not omitted even if number of channels is one.

This method can optionally take additional arguments to help execute transformation operation.

- Raises:

NotImplementedError – When the subclass does not override this method.

MapTransform#

- class monai.transforms.MapTransform(keys, allow_missing_keys=False)[source]#

A subclass of

monai.transforms.Transformwith an assumption that thedatainput ofself.__call__is a MutableMapping such asdict.The

keysparameter will be used to get and set the actual data item to transform. That is, the callable of this transform should follow the pattern:def __call__(self, data): for key in self.keys: if key in data: # update output data with some_transform_function(data[key]). else: # raise exception unless allow_missing_keys==True. return data

- Raises:

ValueError – When

keysis an empty iterable.TypeError – When

keystype is not inUnion[Hashable, Iterable[Hashable]].

- abstract __call__(data)[source]#

dataoften comes from an iteration over an iterable, such astorch.utils.data.Dataset.To simplify the input validations, this method assumes:

datais a Python dictionary,data[key]is a Numpy ndarray, PyTorch Tensor or string, wherekeyis an element ofself.keys, the data shape can be:string data without shape, LoadImaged transform expects file paths,

most of the pre-/post-processing transforms expect:

(num_channels, spatial_dim_1[, spatial_dim_2, ...]), except for example: AddChanneld expects (spatial_dim_1[, spatial_dim_2, …])

the channel dimension is often not omitted even if number of channels is one.

- Raises:

NotImplementedError – When the subclass does not override this method.

- Returns:

An updated dictionary version of

databy applying the transform.

- call_update(data)[source]#

This function is to be called after every self.__call__(data), update data[key_transforms] and data[key_meta_dict] using the content from MetaTensor data[key], for MetaTensor backward compatibility 0.9.0.

- first_key(data)[source]#

Get the first available key of self.keys in the input data dictionary. If no available key, return an empty tuple ().

- Parameters:

data (

dict[Hashable,Any]) – data that the transform will be applied to.

- key_iterator(data, *extra_iterables)[source]#

Iterate across keys and optionally extra iterables. If key is missing, exception is raised if allow_missing_keys==False (default). If allow_missing_keys==True, key is skipped.

- Parameters:

data – data that the transform will be applied to

extra_iterables – anything else to be iterated through

RandomizableTrait#

- class monai.transforms.RandomizableTrait[source]#

An interface to indicate that the transform has the capability to perform randomized transforms to the data that it is called upon. This interface can be extended from by people adapting transforms to the MONAI framework as well as by implementors of MONAI transforms.

LazyTrait#

- class monai.transforms.LazyTrait[source]#

An interface to indicate that the transform has the capability to execute using MONAI’s lazy resampling feature. In order to do this, the implementing class needs to be able to describe its operation as an affine matrix or grid with accompanying metadata. This interface can be extended from by people adapting transforms to the MONAI framework as well as by implementors of MONAI transforms.

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- property requires_current_data#

Get whether the transform requires the input data to be up to date before the transform executes. Such transforms can still execute lazily by adding pending operations to the output tensors. :returns: True if the transform requires its inputs to be up to date and False if it does not

MultiSampleTrait#

- class monai.transforms.MultiSampleTrait[source]#

An interface to indicate that the transform has the capability to return multiple samples given an input, such as when performing random crops of a sample. This interface can be extended from by people adapting transforms to the MONAI framework as well as by implementors of MONAI transforms.

Randomizable#

- class monai.transforms.Randomizable[source]#

An interface for handling random state locally, currently based on a class variable R, which is an instance of np.random.RandomState. This provides the flexibility of component-specific determinism without affecting the global states. It is recommended to use this API with

monai.data.DataLoaderfor deterministic behaviour of the preprocessing pipelines. This API is not thread-safe. Additionally, deepcopying instance of this class often causes insufficient randomness as the random states will be duplicated.- randomize(data)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all

self.Rcalls happen here so that we have a better chance to identify errors of sync the random state.This method can generate the random factors based on properties of the input data.

- Raises:

NotImplementedError – When the subclass does not override this method.

- Return type:

None

- set_random_state(seed=None, state=None)[source]#

Set the random state locally, to control the randomness, the derived classes should use

self.Rinstead of np.random to introduce random factors.- Parameters:

seed – set the random state with an integer seed.

state – set the random state with a np.random.RandomState object.

- Raises:

TypeError – When

stateis not anOptional[np.random.RandomState].- Returns:

a Randomizable instance.

LazyTransform#

- class monai.transforms.LazyTransform(lazy=False)[source]#

An implementation of functionality for lazy transforms that can be subclassed by array and dictionary transforms to simplify implementation of new lazy transforms.

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- property requires_current_data#

Get whether the transform requires the input data to be up to date before the transform executes. Such transforms can still execute lazily by adding pending operations to the output tensors. :returns: True if the transform requires its inputs to be up to date and False if it does not

RandomizableTransform#

- class monai.transforms.RandomizableTransform(prob=1.0, do_transform=True)[source]#

An interface for handling random state locally, currently based on a class variable R, which is an instance of np.random.RandomState. This class introduces a randomized flag _do_transform, is mainly for randomized data augmentation transforms. For example:

from monai.transforms import RandomizableTransform class RandShiftIntensity100(RandomizableTransform): def randomize(self): super().randomize(None) self._offset = self.R.uniform(low=0, high=100) def __call__(self, img): self.randomize() if not self._do_transform: return img return img + self._offset transform = RandShiftIntensity() transform.set_random_state(seed=0) print(transform(10))

- randomize(data)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all

self.Rcalls happen here so that we have a better chance to identify errors of sync the random state.This method can generate the random factors based on properties of the input data.

- Return type:

None

Compose#

- class monai.transforms.Compose(transforms=None, map_items=True, unpack_items=False, log_stats=False, lazy=False, overrides=None)[source]#

Composeprovides the ability to chain a series of callables together in a sequential manner. Each transform in the sequence must take a single argument and return a single value.Composecan be used in two ways:With a series of transforms that accept and return a single ndarray / tensor / tensor-like parameter.

With a series of transforms that accept and return a dictionary that contains one or more parameters. Such transforms must have pass-through semantics that unused values in the dictionary must be copied to the return dictionary. It is required that the dictionary is copied between input and output of each transform.

If some transform takes a data item dictionary as input, and returns a sequence of data items in the transform chain, all following transforms will be applied to each item of this list if map_items is True (the default). If map_items is False, the returned sequence is passed whole to the next callable in the chain.

For example:

A Compose([transformA, transformB, transformC], map_items=True)(data_dict) could achieve the following patch-based transformation on the data_dict input:

transformA normalizes the intensity of ‘img’ field in the data_dict.

transformB crops out image patches from the ‘img’ and ‘seg’ of data_dict, and return a list of three patch samples:

{'img': 3x100x100 data, 'seg': 1x100x100 data, 'shape': (100, 100)} applying transformB ----------> [{'img': 3x20x20 data, 'seg': 1x20x20 data, 'shape': (20, 20)}, {'img': 3x20x20 data, 'seg': 1x20x20 data, 'shape': (20, 20)}, {'img': 3x20x20 data, 'seg': 1x20x20 data, 'shape': (20, 20)},]

transformC then randomly rotates or flips ‘img’ and ‘seg’ of each dictionary item in the list returned by transformB.

The composed transforms will be set the same global random seed if user called set_determinism().

When using the pass-through dictionary operation, you can make use of

monai.transforms.adaptors.adaptorto wrap transforms that don’t conform to the requirements. This approach allows you to use transforms from otherwise incompatible libraries with minimal additional work.Note

In many cases, Compose is not the best way to create pre-processing pipelines. Pre-processing is often not a strictly sequential series of operations, and much of the complexity arises when a not-sequential set of functions must be called as if it were a sequence.

Example: images and labels Images typically require some kind of normalization that labels do not. Both are then typically augmented through the use of random rotations, flips, and deformations. Compose can be used with a series of transforms that take a dictionary that contains ‘image’ and ‘label’ entries. This might require wrapping torchvision transforms before passing them to compose. Alternatively, one can create a class with a __call__ function that calls your pre-processing functions taking into account that not all of them are called on the labels.

Lazy resampling:

Lazy resampling is an experimental feature introduced in 1.2. Its purpose is to reduce the number of resample operations that must be carried out when executing a pipeline of transforms. This can provide significant performance improvements in terms of pipeline executing speed and memory usage, and can also significantly reduce the loss of information that occurs when performing a number of spatial resamples in succession.

Lazy resampling can be enabled or disabled through the

lazyparameter, either by specifying it at initialisation time or overriding it at call time.False (default): Don’t perform any lazy resampling

None: Perform lazy resampling based on the ‘lazy’ properties of the transform instances.

True: Always perform lazy resampling if possible. This will ignore the

lazyproperties of the transform instances

Please see the Lazy Resampling topic for more details of this feature and examples of its use.

- Parameters:

transforms – sequence of callables.

map_items – whether to apply transform to each item in the input data if data is a list or tuple. defaults to True.

unpack_items – whether to unpack input data with * as parameters for the callable function of transform. defaults to False.

log_stats – this optional parameter allows you to specify a logger by name for logging of pipeline execution. Setting this to False disables logging. Setting it to True enables logging to the default loggers. Setting a string overrides the logger name to which logging is performed.

lazy – whether to enable Lazy Resampling for lazy transforms. If False, transforms will be carried out on a transform by transform basis. If True, all lazy transforms will be executed by accumulating changes and resampling as few times as possible. If lazy is None, Compose will perform lazy execution on lazy transforms that have their lazy property set to True.

overrides – this optional parameter allows you to specify a dictionary of parameters that should be overridden when executing a pipeline. These each parameter that is compatible with a given transform is then applied to that transform before it is executed. Note that overrides are currently only applied when Lazy Resampling is enabled for the pipeline or a given transform. If lazy is False they are ignored. Currently supported args are: {

"mode","padding_mode","dtype","align_corners","resample_mode",device}.

- flatten()[source]#

Return a Composition with a simple list of transforms, as opposed to any nested Compositions.

e.g., t1 = Compose([x, x, x, x, Compose([Compose([x, x]), x, x])]).flatten() will result in the equivalent of t1 = Compose([x, x, x, x, x, x, x, x]).

- get_index_of_first(predicate)[source]#

get_index_of_first takes a

predicateand returns the index of the first transform that satisfies the predicate (ie. makes the predicate return True). If it is unable to find a transform that satisfies thepredicate, it returns None.Example

c = Compose([Flip(…), Rotate90(…), Zoom(…), RandRotate(…), Resize(…)])

print(c.get_index_of_first(lambda t: isinstance(t, RandomTrait))) >>> 3 print(c.get_index_of_first(lambda t: isinstance(t, Compose))) >>> None

Note

This is only performed on the transforms directly held by this instance. If this instance has nested

Composetransforms or other transforms that contain transforms, it does not iterate into them.- Parameters:

predicate – a callable that takes a single argument and returns a bool. When called

compose (it is passed a transform from the sequence of transforms contained by this)

instance.

- Returns:

The index of the first transform in the sequence for which

predicatereturns True. None if no transform satisfies thepredicate

- inverse(data)[source]#

Inverse of

__call__.- Raises:

NotImplementedError – When the subclass does not override this method.

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- randomize(data=None)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all

self.Rcalls happen here so that we have a better chance to identify errors of sync the random state.This method can generate the random factors based on properties of the input data.

- Raises:

NotImplementedError – When the subclass does not override this method.

- set_random_state(seed=None, state=None)[source]#

Set the random state locally, to control the randomness, the derived classes should use

self.Rinstead of np.random to introduce random factors.- Parameters:

seed – set the random state with an integer seed.

state – set the random state with a np.random.RandomState object.

- Raises:

TypeError – When

stateis not anOptional[np.random.RandomState].- Returns:

a Randomizable instance.

InvertibleTransform#

- class monai.transforms.InvertibleTransform[source]#

Classes for invertible transforms.

This class exists so that an

invertmethod can be implemented. This allows, for example, images to be cropped, rotated, padded, etc., during training and inference, and after be returned to their original size before saving to file for comparison in an external viewer.When the

inversemethod is called:the inverse is called on each key individually, which allows for different parameters being passed to each label (e.g., different interpolation for image and label).

the inverse transforms are applied in a last-in-first-out order. As the inverse is applied, its entry is removed from the list detailing the applied transformations. That is to say that during the forward pass, the list of applied transforms grows, and then during the inverse it shrinks back down to an empty list.

We currently check that the

id()of the transform is the same in the forward and inverse directions. This is a useful check to ensure that the inverses are being processed in the correct order.Note to developers: When converting a transform to an invertible transform, you need to:

Inherit from this class.

In

__call__, add a call topush_transform.Any extra information that might be needed for the inverse can be included with the dictionary

extra_info. This dictionary should have the same keys regardless of whetherdo_transformwas True or False and can only contain objects that are accepted in pytorch data loader’s collate function (e.g., None is not allowed).Implement an

inversemethod. Make sure that after performing the inverse,pop_transformis called.

TraceableTransform#

- class monai.transforms.TraceableTransform[source]#

Maintains a stack of applied transforms to data.

- Data can be one of two types:

A MetaTensor (this is the preferred data type).

- A dictionary of data containing arrays/tensors and auxiliary metadata. In

this case, a key must be supplied (this dictionary-based approach is deprecated).

If data is of type MetaTensor, then the applied transform will be added to

data.applied_operations.- If data is a dictionary, then one of two things can happen:

If data[key] is a MetaTensor, the applied transform will be added to

data[key].applied_operations.- Else, the applied transform will be appended to an adjacent list using

trace_key. If, for example, the key is image, then the transform will be appended to image_transforms (this dictionary-based approach is deprecated).

- Hopefully it is clear that there are three total possibilities:

data is MetaTensor

data is dictionary, data[key] is MetaTensor

data is dictionary, data[key] is not MetaTensor (this is a deprecated approach).

The

__call__method of this transform class must be implemented so that the transformation information is stored during the data transformation.The information in the stack of applied transforms must be compatible with the default collate, by only storing strings, numbers and arrays.

tracing could be enabled by self.set_tracing or setting MONAI_TRACE_TRANSFORM when initializing the class.

- get_most_recent_transform(data, key=None, check=True, pop=False)[source]#

Get most recent transform for the stack.

- Parameters:

data – dictionary of data or MetaTensor.

key (

Optional[Hashable]) – if data is a dictionary, data[key] will be modified.check (

bool) – if true, check that self is the same type as the most recently-applied transform.pop (

bool) – if true, remove the transform as it is returned.

- Returns:

Dictionary of most recently applied transform

- Raises:

- RuntimeError – data is neither MetaTensor nor dictionary

- get_transform_info()[source]#

Return a dictionary with the relevant information pertaining to an applied transform.

- Return type:

dict

- pop_transform(data, key=None, check=True)[source]#

Return and pop the most recent transform.

- Parameters:

data – dictionary of data or MetaTensor

key (

Optional[Hashable]) – if data is a dictionary, data[key] will be modifiedcheck (

bool) – if true, check that self is the same type as the most recently-applied transform.

- Returns:

Dictionary of most recently applied transform

- Raises:

- RuntimeError – data is neither MetaTensor nor dictionary

- push_transform(data, *args, **kwargs)[source]#

Push to a stack of applied transforms of

data.- Parameters:

data – dictionary of data or MetaTensor.

args – additional positional arguments to track_transform_meta.

kwargs – additional keyword arguments to track_transform_meta, set

replace=True(default False) to rewrite the last transform infor in applied_operation/pending_operation based onself.get_transform_info().

- trace_transform(to_trace)[source]#

Temporarily set the tracing status of a transform with a context manager.

- classmethod track_transform_meta(data, key=None, sp_size=None, affine=None, extra_info=None, orig_size=None, transform_info=None, lazy=False)[source]#

Update a stack of applied/pending transforms metadata of

data.- Parameters:

data – dictionary of data or MetaTensor.

key – if data is a dictionary, data[key] will be modified.

sp_size – the expected output spatial size when the transform is applied. it can be tensor or numpy, but will be converted to a list of integers.

affine – the affine representation of the (spatial) transform in the image space. When the transform is applied, meta_tensor.affine will be updated to

meta_tensor.affine @ affine.extra_info – if desired, any extra information pertaining to the applied transform can be stored in this dictionary. These are often needed for computing the inverse transformation.

orig_size – sometimes during the inverse it is useful to know what the size of the original image was, in which case it can be supplied here.

transform_info – info from self.get_transform_info().

lazy – whether to push the transform to pending_operations or applied_operations.

- Returns:

For backward compatibility, if

datais a dictionary, it returns the dictionary with updateddata[key]. Otherwise, this function returns a MetaObj with updated transform metadata.

BatchInverseTransform#

- class monai.transforms.BatchInverseTransform(transform, loader, collate_fn=<function no_collation>, num_workers=0, detach=True, pad_batch=True, fill_value=None)[source]#

Perform inverse on a batch of data. This is useful if you have inferred a batch of images and want to invert them all.

- __init__(transform, loader, collate_fn=<function no_collation>, num_workers=0, detach=True, pad_batch=True, fill_value=None)[source]#

- Parameters:

transform – a callable data transform on input data.

loader – data loader used to run transforms and generate the batch of data.

collate_fn – how to collate data after inverse transformations. default won’t do any collation, so the output will be a list of size batch size.

num_workers – number of workers when run data loader for inverse transforms, default to 0 as only run 1 iteration and multi-processing may be even slower. if the transforms are really slow, set num_workers for multi-processing. if set to None, use the num_workers of the transform data loader.

detach – whether to detach the tensors. Scalars tensors will be detached into number types instead of torch tensors.

pad_batch – when the items in a batch indicate different batch size, whether to pad all the sequences to the longest. If False, the batch size will be the length of the shortest sequence.

fill_value – the value to fill the padded sequences when pad_batch=True.

Decollated#

- class monai.transforms.Decollated(keys=None, detach=True, pad_batch=True, fill_value=None, allow_missing_keys=False)[source]#

Decollate a batch of data. If input is a dictionary, it also supports to only decollate specified keys. Note that unlike most MapTransforms, it will delete the other keys that are not specified. if keys=None, it will decollate all the data in the input. It replicates the scalar values to every item of the decollated list.

- Parameters:

keys – keys of the corresponding items to decollate, note that it will delete other keys not specified. if None, will decollate all the keys. see also:

monai.transforms.compose.MapTransform.detach – whether to detach the tensors. Scalars tensors will be detached into number types instead of torch tensors.

pad_batch – when the items in a batch indicate different batch size, whether to pad all the sequences to the longest. If False, the batch size will be the length of the shortest sequence.

fill_value – the value to fill the padded sequences when pad_batch=True.

allow_missing_keys – don’t raise exception if key is missing.

OneOf#

- class monai.transforms.OneOf(transforms=None, weights=None, map_items=True, unpack_items=False, log_stats=False, lazy=False, overrides=None)[source]#

OneOfprovides the ability to randomly choose one transform out of a list of callables with pre-defined probabilities for each.- Parameters:

transforms – sequence of callables.

weights – probabilities corresponding to each callable in transforms. Probabilities are normalized to sum to one.

map_items – whether to apply transform to each item in the input data if data is a list or tuple. defaults to True.

unpack_items – whether to unpack input data with * as parameters for the callable function of transform. defaults to False.

log_stats – this optional parameter allows you to specify a logger by name for logging of pipeline execution. Setting this to False disables logging. Setting it to True enables logging to the default loggers. Setting a string overrides the logger name to which logging is performed.

lazy – whether to enable Lazy Resampling for lazy transforms. If False, transforms will be carried out on a transform by transform basis. If True, all lazy transforms will be executed by accumulating changes and resampling as few times as possible. If lazy is None, Compose will perform lazy execution on lazy transforms that have their lazy property set to True.

overrides – this optional parameter allows you to specify a dictionary of parameters that should be overridden when executing a pipeline. These each parameter that is compatible with a given transform is then applied to that transform before it is executed. Note that overrides are currently only applied when Lazy Resampling is enabled for the pipeline or a given transform. If lazy is False they are ignored. Currently supported args are: {

"mode","padding_mode","dtype","align_corners","resample_mode",device}.

RandomOrder#

- class monai.transforms.RandomOrder(transforms=None, map_items=True, unpack_items=False, log_stats=False, lazy=False, overrides=None)[source]#

RandomOrderprovides the ability to apply a list of transformations in random order.- Parameters:

transforms – sequence of callables.

map_items – whether to apply transform to each item in the input data if data is a list or tuple. defaults to True.

unpack_items – whether to unpack input data with * as parameters for the callable function of transform. defaults to False.

log_stats – this optional parameter allows you to specify a logger by name for logging of pipeline execution. Setting this to False disables logging. Setting it to True enables logging to the default loggers. Setting a string overrides the logger name to which logging is performed.

lazy – whether to enable Lazy Resampling for lazy transforms. If False, transforms will be carried out on a transform by transform basis. If True, all lazy transforms will be executed by accumulating changes and resampling as few times as possible. If lazy is None, Compose will perform lazy execution on lazy transforms that have their lazy property set to True.

overrides – this optional parameter allows you to specify a dictionary of parameters that should be overridden when executing a pipeline. These each parameter that is compatible with a given transform is then applied to that transform before it is executed. Note that overrides are currently only applied when Lazy Resampling is enabled for the pipeline or a given transform. If lazy is False they are ignored. Currently supported args are: {

"mode","padding_mode","dtype","align_corners","resample_mode",device}.

SomeOf#

- class monai.transforms.SomeOf(transforms=None, map_items=True, unpack_items=False, log_stats=False, num_transforms=None, replace=False, weights=None, lazy=False, overrides=None)[source]#

SomeOfsamples a different sequence of transforms to apply each time it is called.It can be configured to sample a fixed or varying number of transforms each time its called. Samples are drawn uniformly, or from user supplied transform weights. When varying the number of transforms sampled per call, the number of transforms to sample that call is sampled uniformly from a range supplied by the user.

- Parameters:

transforms – list of callables.

map_items – whether to apply transform to each item in the input data if data is a list or tuple. Defaults to True.

unpack_items – whether to unpack input data with * as parameters for the callable function of transform. Defaults to False.

log_stats – this optional parameter allows you to specify a logger by name for logging of pipeline execution. Setting this to False disables logging. Setting it to True enables logging to the default loggers. Setting a string overrides the logger name to which logging is performed.

num_transforms – a 2-tuple, int, or None. The 2-tuple specifies the minimum and maximum (inclusive) number of transforms to sample at each iteration. If an int is given, the lower and upper bounds are set equal. None sets it to len(transforms). Default to None.

replace – whether to sample with replacement. Defaults to False.

weights – weights to use in for sampling transforms. Will be normalized to 1. Default: None (uniform).

lazy – whether to enable Lazy Resampling for lazy transforms. If False, transforms will be carried out on a transform by transform basis. If True, all lazy transforms will be executed by accumulating changes and resampling as few times as possible. If lazy is None, Compose will perform lazy execution on lazy transforms that have their lazy property set to True.

overrides – this optional parameter allows you to specify a dictionary of parameters that should be overridden when executing a pipeline. These each parameter that is compatible with a given transform is then applied to that transform before it is executed. Note that overrides are currently only applied when Lazy Resampling is enabled for the pipeline or a given transform. If lazy is False they are ignored. Currently supported args are: {

"mode","padding_mode","dtype","align_corners","resample_mode",device}.

Functionals#

Crop and Pad (functional)#

A collection of “functional” transforms for spatial operations.

- monai.transforms.croppad.functional.crop_func(img, slices, lazy, transform_info)[source]#

Functional implementation of cropping a MetaTensor. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img (

Tensor) – data to be transformed, assuming img is channel-first and cropping doesn’t apply to the channel dim.slices (

tuple[slice, …]) – the crop slices computed based on specified center & size or start & end or slices.lazy (

bool) – a flag indicating whether the operation should be performed in a lazy fashion or not.transform_info (

dict) – a dictionary with the relevant information pertaining to an applied transform.

- Return type:

Tensor

- monai.transforms.croppad.functional.crop_or_pad_nd(img, translation_mat, spatial_size, mode, **kwargs)[source]#

Crop or pad using the translation matrix and spatial size. The translation coefficients are rounded to the nearest integers. For a more generic implementation, please see

monai.transforms.SpatialResample.- Parameters:

img (

Tensor) – data to be transformed, assuming img is channel-first and padding doesn’t apply to the channel dim.translation_mat – the translation matrix to be applied to the image. A translation matrix generated by, for example,

monai.transforms.utils.create_translate(). The translation coefficients are rounded to the nearest integers.spatial_size (

tuple[int, …]) – the spatial size of the output image.mode (

str) – the padding mode.kwargs – other arguments for the np.pad or torch.pad function.

- monai.transforms.croppad.functional.pad_func(img, to_pad, transform_info, mode=constant, lazy=False, **kwargs)[source]#

Functional implementation of padding a MetaTensor. This function operates eagerly or lazily according to

lazy(defaultFalse).torch.nn.functional.pad is used unless the mode or kwargs are not available in torch, in which case np.pad will be used.

- Parameters:

img (

Tensor) – data to be transformed, assuming img is channel-first and padding doesn’t apply to the channel dim.to_pad (

tuple[tuple[int,int]]) – the amount to be padded in each dimension [(low_H, high_H), (low_W, high_W), …]. note that it including channel dimension.transform_info (

dict) – a dictionary with the relevant information pertaining to an applied transform.mode (

str) – available modes: (Numpy) {"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} (PyTorch) {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/stable/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmllazy (

bool) – a flag indicating whether the operation should be performed in a lazy fashion or not.transform_info – a dictionary with the relevant information pertaining to an applied transform.

kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

- Return type:

Tensor

- monai.transforms.croppad.functional.pad_nd(img, to_pad, mode=constant, **kwargs)[source]#

Pad img for a given an amount of padding in each dimension.

torch.nn.functional.pad is used unless the mode or kwargs are not available in torch, in which case np.pad will be used.

- Parameters:

img (~NdarrayTensor) – data to be transformed, assuming img is channel-first and padding doesn’t apply to the channel dim.

to_pad (

list[tuple[int,int]]) – the amount to be padded in each dimension [(low_H, high_H), (low_W, high_W), …]. default to self.to_pad.mode (

str) – available modes: (Numpy) {"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} (PyTorch) {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/stable/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmlkwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

- Return type:

~NdarrayTensor

Spatial (functional)#

A collection of “functional” transforms for spatial operations.

- monai.transforms.spatial.functional.affine_func(img, affine, grid, resampler, sp_size, mode, padding_mode, do_resampling, image_only, lazy, transform_info)[source]#

Functional implementation of affine. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

affine – the affine transformation to be applied, it can be a 3x3 or 4x4 matrix. This should be defined for the voxel space spatial centers (

float(size - 1)/2).grid – used in non-lazy mode to pre-compute the grid to do the resampling.

resampler – the resampler function, see also:

monai.transforms.Resample.sp_size – output image spatial size.

mode – {

"bilinear","nearest"} or spline interpolation order 0-5 (integers). Interpolation mode to calculate output values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html When it’s an integer, the numpy (cpu tensor)/cupy (cuda tensor) backends will be used and the value represents the order of the spline interpolation. See also: https://docs.scipy.org/doc/scipy/reference/generated/scipy.ndimage.map_coordinates.htmlpadding_mode – {

"zeros","border","reflection"} Padding mode for outside grid values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html When mode is an integer, using numpy/cupy backends, this argument accepts {‘reflect’, ‘grid-mirror’, ‘constant’, ‘grid-constant’, ‘nearest’, ‘mirror’, ‘grid-wrap’, ‘wrap’}. See also: https://docs.scipy.org/doc/scipy/reference/generated/scipy.ndimage.map_coordinates.htmldo_resampling – whether to do the resampling, this is a flag for the use case of updating metadata but skipping the actual (potentially heavy) resampling operation.

image_only – if True return only the image volume, otherwise return (image, affine).

lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

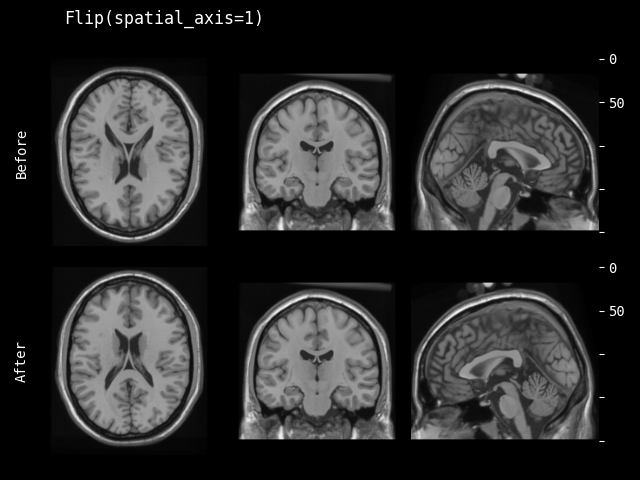

- monai.transforms.spatial.functional.flip(img, sp_axes, lazy, transform_info)[source]#

Functional implementation of flip. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

sp_axes – spatial axes along which to flip over. If None, will flip over all of the axes of the input array. If axis is negative it counts from the last to the first axis. If axis is a tuple of ints, flipping is performed on all of the axes specified in the tuple.

lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

- monai.transforms.spatial.functional.orientation(img, original_affine, spatial_ornt, lazy, transform_info)[source]#

Functional implementation of changing the input image’s orientation into the specified based on spatial_ornt. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

original_affine – original affine of the input image.

spatial_ornt – orientations of the spatial axes, see also https://nipy.org/nibabel/reference/nibabel.orientations.html

lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

- Return type:

Tensor

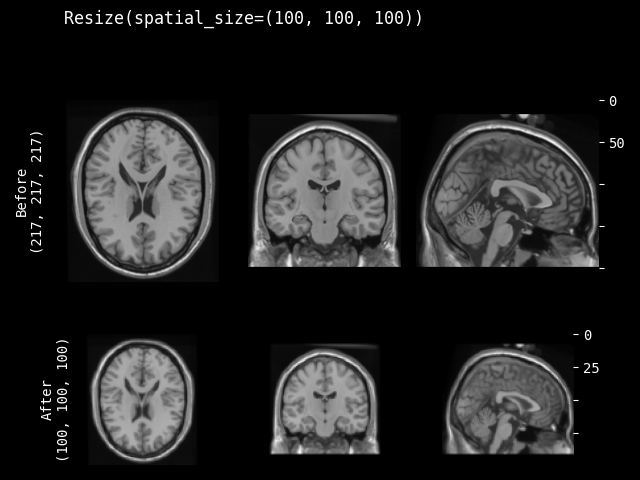

- monai.transforms.spatial.functional.resize(img, out_size, mode, align_corners, dtype, input_ndim, anti_aliasing, anti_aliasing_sigma, lazy, transform_info)[source]#

Functional implementation of resize. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

out_size – expected shape of spatial dimensions after resize operation.

mode – {

"nearest","nearest-exact","linear","bilinear","bicubic","trilinear","area"} The interpolation mode. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.interpolate.htmlalign_corners – This only has an effect when mode is ‘linear’, ‘bilinear’, ‘bicubic’ or ‘trilinear’.

dtype – data type for resampling computation. If None, use the data type of input data.

input_ndim – number of spatial dimensions.

anti_aliasing – whether to apply a Gaussian filter to smooth the image prior to downsampling. It is crucial to filter when downsampling the image to avoid aliasing artifacts. See also

skimage.transform.resizeanti_aliasing_sigma – {float, tuple of floats}, optional Standard deviation for Gaussian filtering used when anti-aliasing.

lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

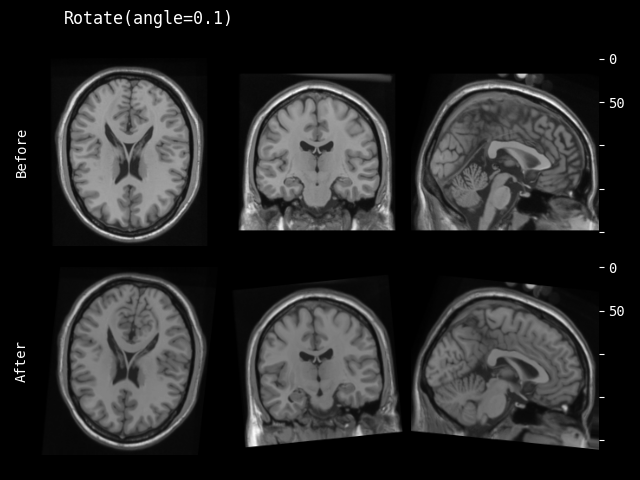

- monai.transforms.spatial.functional.rotate(img, angle, output_shape, mode, padding_mode, align_corners, dtype, lazy, transform_info)[source]#

Functional implementation of rotate. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

angle – Rotation angle(s) in radians. should a float for 2D, three floats for 3D.

output_shape – output shape of the rotated data.

mode – {

"bilinear","nearest"} Interpolation mode to calculate output values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.htmlpadding_mode – {

"zeros","border","reflection"} Padding mode for outside grid values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.htmlalign_corners – See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html

dtype – data type for resampling computation. If None, use the data type of input data. To be compatible with other modules, the output data type is always

float32.lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

- monai.transforms.spatial.functional.rotate90(img, axes, k, lazy, transform_info)[source]#

Functional implementation of rotate90. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

axes – 2 int numbers, defines the plane to rotate with 2 spatial axes. If axis is negative it counts from the last to the first axis.

k – number of times to rotate by 90 degrees.

lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

- monai.transforms.spatial.functional.spatial_resample(img, dst_affine, spatial_size, mode, padding_mode, align_corners, dtype_pt, lazy, transform_info)[source]#

Functional implementation of resampling the input image to the specified

dst_affinematrix andspatial_size. This function operates eagerly or lazily according tolazy(defaultFalse).- Parameters:

img – data to be resampled, assuming img is channel-first.

dst_affine – target affine matrix, if None, use the input affine matrix, effectively no resampling.

spatial_size – output spatial size, if the component is

-1, use the corresponding input spatial size.mode – {

"bilinear","nearest"} or spline interpolation order 0-5 (integers). Interpolation mode to calculate output values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html When it’s an integer, the numpy (cpu tensor)/cupy (cuda tensor) backends will be used and the value represents the order of the spline interpolation. See also: https://docs.scipy.org/doc/scipy/reference/generated/scipy.ndimage.map_coordinates.htmlpadding_mode – {

"zeros","border","reflection"} Padding mode for outside grid values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html When mode is an integer, using numpy/cupy backends, this argument accepts {‘reflect’, ‘grid-mirror’, ‘constant’, ‘grid-constant’, ‘nearest’, ‘mirror’, ‘grid-wrap’, ‘wrap’}. See also: https://docs.scipy.org/doc/scipy/reference/generated/scipy.ndimage.map_coordinates.htmlalign_corners – Geometrically, we consider the pixels of the input as squares rather than points. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html

dtype_pt – data dtype for resampling computation.

lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

- Return type:

Tensor

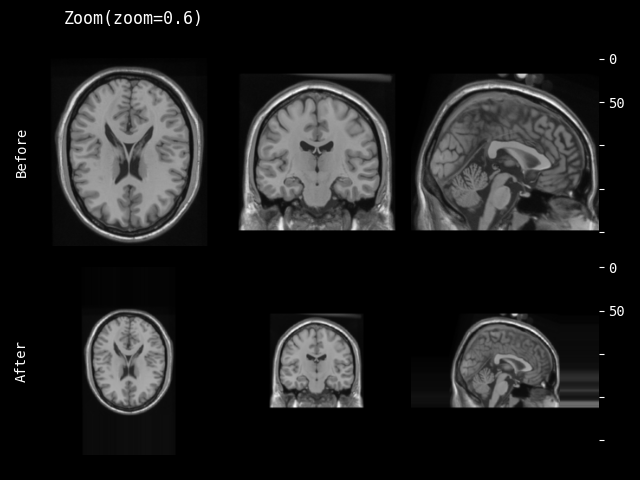

- monai.transforms.spatial.functional.zoom(img, scale_factor, keep_size, mode, padding_mode, align_corners, dtype, lazy, transform_info)[source]#

Functional implementation of zoom. This function operates eagerly or lazily according to

lazy(defaultFalse).- Parameters:

img – data to be changed, assuming img is channel-first.

scale_factor – The zoom factor along the spatial axes. If a float, zoom is the same for each spatial axis. If a sequence, zoom should contain one value for each spatial axis.

keep_size – Whether keep original size (padding/slicing if needed).

mode – {

"bilinear","nearest"} Interpolation mode to calculate output values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.htmlpadding_mode – {

"zeros","border","reflection"} Padding mode for outside grid values. See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.htmlalign_corners – See also: https://pytorch.org/docs/stable/generated/torch.nn.functional.grid_sample.html

dtype – data type for resampling computation. If None, use the data type of input data. To be compatible with other modules, the output data type is always

float32.lazy – a flag that indicates whether the operation should be performed lazily or not

transform_info – a dictionary with the relevant information pertaining to an applied transform.

Vanilla Transforms#

Crop and Pad#

PadListDataCollate#

- class monai.transforms.PadListDataCollate(method=symmetric, mode=constant, **kwargs)[source]#

Same as MONAI’s

list_data_collate, except any tensors are centrally padded to match the shape of the biggest tensor in each dimension. This transform is useful if some of the applied transforms generate batch data of different sizes.This can be used on both list and dictionary data. Note that in the case of the dictionary data, it may add the transform information to the list of invertible transforms if input batch have different spatial shape, so need to call static method: inverse before inverting other transforms.

Note that normally, a user won’t explicitly use the __call__ method. Rather this would be passed to the DataLoader. This means that __call__ handles data as it comes out of a DataLoader, containing batch dimension. However, the inverse operates on dictionaries containing images of shape C,H,W,[D]. This asymmetry is necessary so that we can pass the inverse through multiprocessing.

- Parameters:

method (

str) – padding method (seemonai.transforms.SpatialPad)mode (

str) – padding mode (seemonai.transforms.SpatialPad)kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

Pad#

- class monai.transforms.Pad(to_pad=None, mode=constant, lazy=False, **kwargs)[source]#

Perform padding for a given an amount of padding in each dimension.

torch.nn.functional.pad is used unless the mode or kwargs are not available in torch, in which case np.pad will be used.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

to_pad – the amount to pad in each dimension (including the channel) [(low_H, high_H), (low_W, high_W), …]. if None, must provide in the __call__ at runtime.

mode – available modes: (Numpy) {

"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} (PyTorch) {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/1.18/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.html requires pytorch >= 1.10 for best compatibility.lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

- __call__(img, to_pad=None, mode=None, lazy=None, **kwargs)[source]#

- Parameters:

img – data to be transformed, assuming img is channel-first and padding doesn’t apply to the channel dim.

to_pad – the amount to be padded in each dimension [(low_H, high_H), (low_W, high_W), …]. default to self.to_pad.

mode – available modes: (Numpy) {

"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} (PyTorch) {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/1.18/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmllazy – a flag to override the lazy behaviour for this call, if set. Defaults to None.

kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

- compute_pad_width(spatial_shape)[source]#

dynamically compute the pad width according to the spatial shape. the output is the amount of padding for all dimensions including the channel.

- Parameters:

spatial_shape (

Sequence[int]) – spatial shape of the original image.- Return type:

tuple[tuple[int,int]]

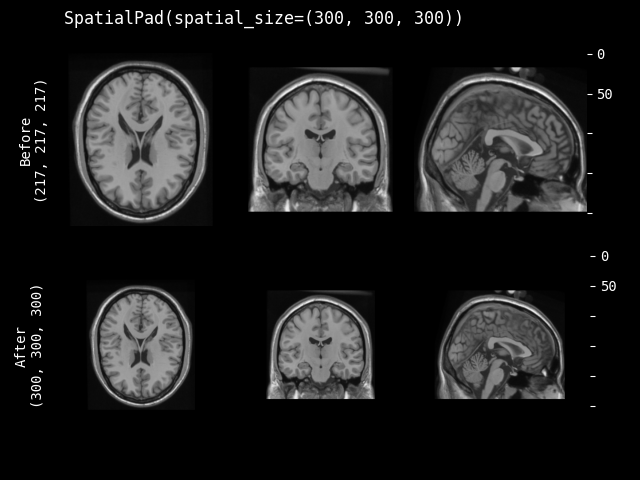

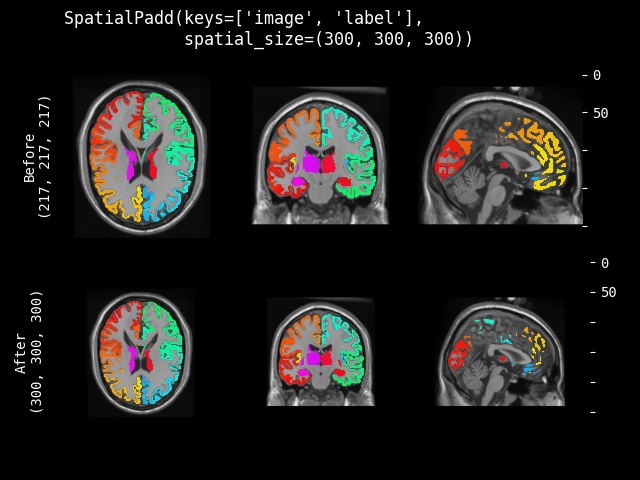

SpatialPad#

- class monai.transforms.SpatialPad(spatial_size, method=symmetric, mode=constant, lazy=False, **kwargs)[source]#

Performs padding to the data, symmetric for all sides or all on one side for each dimension.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

spatial_size – the spatial size of output data after padding, if a dimension of the input data size is larger than the pad size, will not pad that dimension. If its components have non-positive values, the corresponding size of input image will be used (no padding). for example: if the spatial size of input data is [30, 30, 30] and spatial_size=[32, 25, -1], the spatial size of output data will be [32, 30, 30].

method – {

"symmetric","end"} Pad image symmetrically on every side or only pad at the end sides. Defaults to"symmetric".mode – available modes for numpy array:{

"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} available modes for PyTorch Tensor: {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/1.18/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmllazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

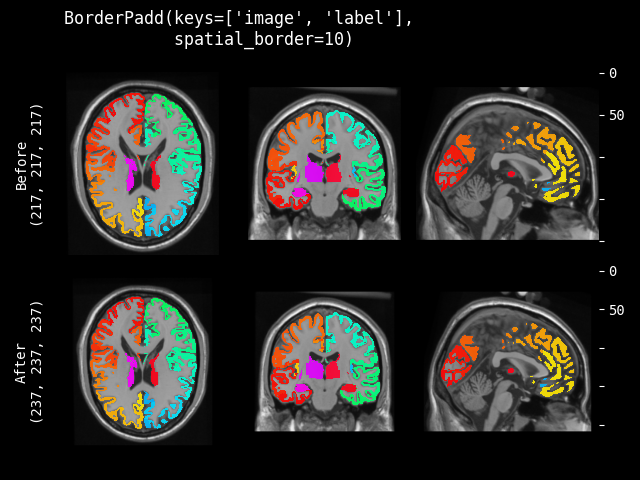

BorderPad#

- class monai.transforms.BorderPad(spatial_border, mode=constant, lazy=False, **kwargs)[source]#

Pad the input data by adding specified borders to every dimension.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

spatial_border –

specified size for every spatial border. Any -ve values will be set to 0. It can be 3 shapes:

single int number, pad all the borders with the same size.

length equals the length of image shape, pad every spatial dimension separately. for example, image shape(CHW) is [1, 4, 4], spatial_border is [2, 1], pad every border of H dim with 2, pad every border of W dim with 1, result shape is [1, 8, 6].

length equals 2 x (length of image shape), pad every border of every dimension separately. for example, image shape(CHW) is [1, 4, 4], spatial_border is [1, 2, 3, 4], pad top of H dim with 1, pad bottom of H dim with 2, pad left of W dim with 3, pad right of W dim with 4. the result shape is [1, 7, 11].

mode – available modes for numpy array:{

"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} available modes for PyTorch Tensor: {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/1.18/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmllazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

- compute_pad_width(spatial_shape)[source]#

dynamically compute the pad width according to the spatial shape. the output is the amount of padding for all dimensions including the channel.

- Parameters:

spatial_shape (

Sequence[int]) – spatial shape of the original image.- Return type:

tuple[tuple[int,int]]

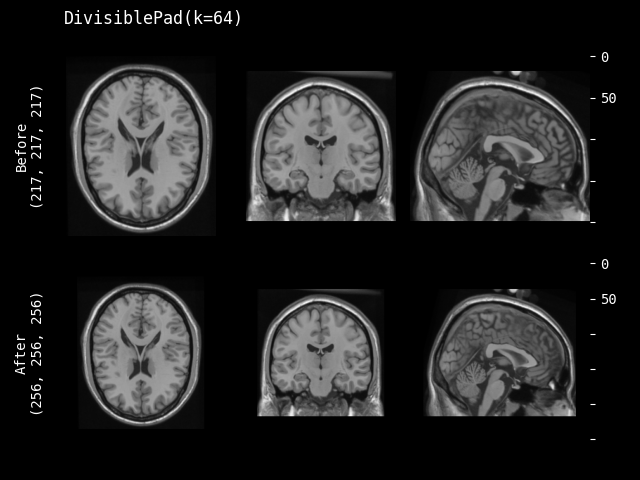

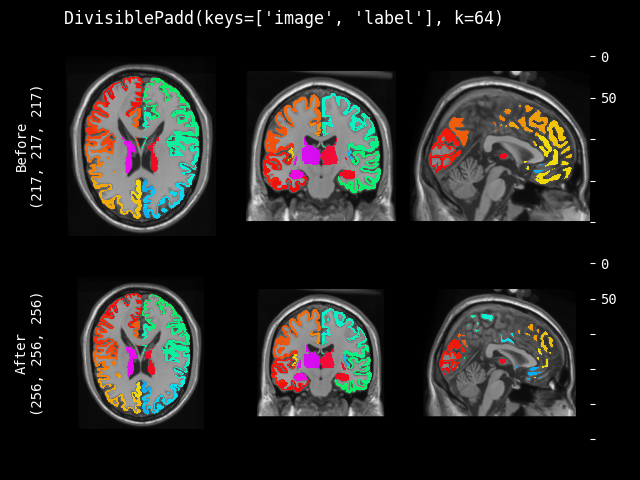

DivisiblePad#

- class monai.transforms.DivisiblePad(k, mode=constant, method=symmetric, lazy=False, **kwargs)[source]#

Pad the input data, so that the spatial sizes are divisible by k.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- __init__(k, mode=constant, method=symmetric, lazy=False, **kwargs)[source]#

- Parameters:

k – the target k for each spatial dimension. if k is negative or 0, the original size is preserved. if k is an int, the same k be applied to all the input spatial dimensions.

mode – available modes for numpy array:{

"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} available modes for PyTorch Tensor: {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/1.18/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmlmethod – {

"symmetric","end"} Pad image symmetrically on every side or only pad at the end sides. Defaults to"symmetric".lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

See also

monai.transforms.SpatialPad

- compute_pad_width(spatial_shape)[source]#

dynamically compute the pad width according to the spatial shape. the output is the amount of padding for all dimensions including the channel.

- Parameters:

spatial_shape (

Sequence[int]) – spatial shape of the original image.- Return type:

tuple[tuple[int,int]]

Crop#

- class monai.transforms.Crop(lazy=False)[source]#

Perform crop operations on the input image.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

lazy (

bool) – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

- __call__(img, slices, lazy=None)[source]#

Apply the transform to img, assuming img is channel-first and slicing doesn’t apply to the channel dim.

- static compute_slices(roi_center=None, roi_size=None, roi_start=None, roi_end=None, roi_slices=None)[source]#

Compute the crop slices based on specified center & size or start & end or slices.

- Parameters:

roi_center – voxel coordinates for center of the crop ROI.

roi_size – size of the crop ROI, if a dimension of ROI size is larger than image size, will not crop that dimension of the image.

roi_start – voxel coordinates for start of the crop ROI.

roi_end – voxel coordinates for end of the crop ROI, if a coordinate is out of image, use the end coordinate of image.

roi_slices – list of slices for each of the spatial dimensions.

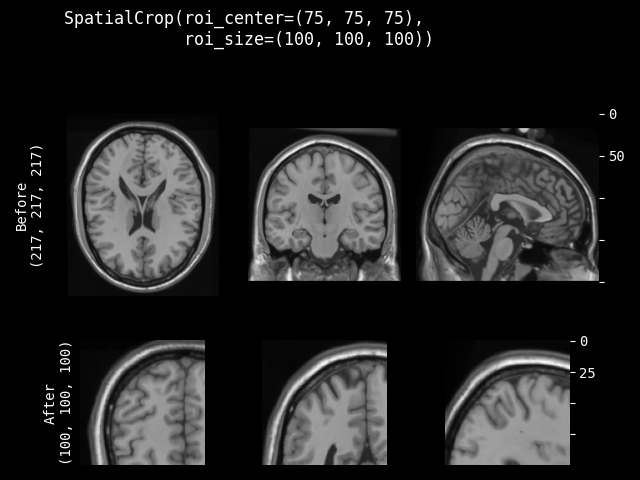

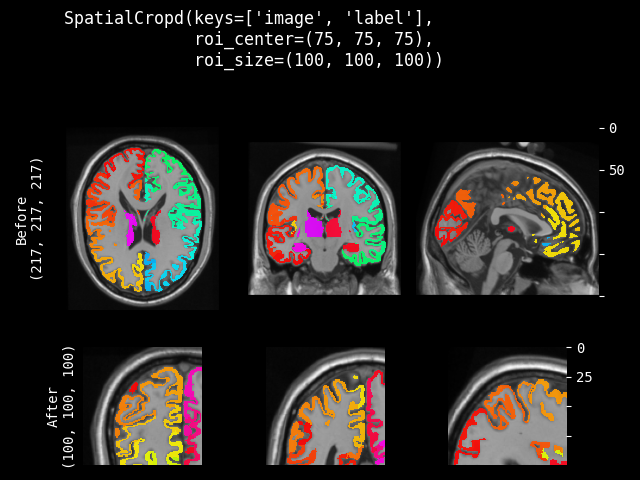

SpatialCrop#

- class monai.transforms.SpatialCrop(roi_center=None, roi_size=None, roi_start=None, roi_end=None, roi_slices=None, lazy=False)[source]#

General purpose cropper to produce sub-volume region of interest (ROI). If a dimension of the expected ROI size is larger than the input image size, will not crop that dimension. So the cropped result may be smaller than the expected ROI, and the cropped results of several images may not have exactly the same shape. It can support to crop ND spatial (channel-first) data.

- The cropped region can be parameterised in various ways:

a list of slices for each spatial dimension (allows for use of negative indexing and None)

a spatial center and size

the start and end coordinates of the ROI

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- __call__(img, lazy=None)[source]#

Apply the transform to img, assuming img is channel-first and slicing doesn’t apply to the channel dim.

- __init__(roi_center=None, roi_size=None, roi_start=None, roi_end=None, roi_slices=None, lazy=False)[source]#

- Parameters:

roi_center – voxel coordinates for center of the crop ROI.

roi_size – size of the crop ROI, if a dimension of ROI size is larger than image size, will not crop that dimension of the image.

roi_start – voxel coordinates for start of the crop ROI.

roi_end – voxel coordinates for end of the crop ROI, if a coordinate is out of image, use the end coordinate of image.

roi_slices – list of slices for each of the spatial dimensions.

lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

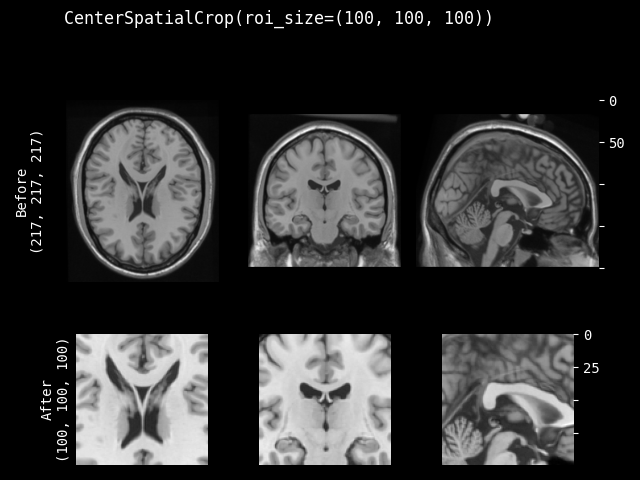

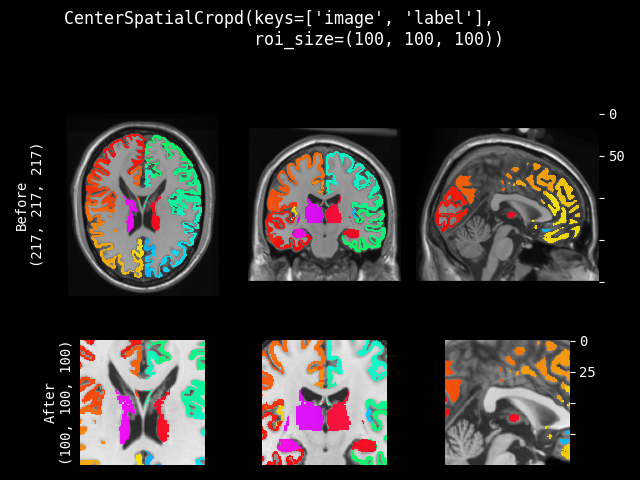

CenterSpatialCrop#

- class monai.transforms.CenterSpatialCrop(roi_size, lazy=False)[source]#

Crop at the center of image with specified ROI size. If a dimension of the expected ROI size is larger than the input image size, will not crop that dimension. So the cropped result may be smaller than the expected ROI, and the cropped results of several images may not have exactly the same shape.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

roi_size – the spatial size of the crop region e.g. [224,224,128] if a dimension of ROI size is larger than image size, will not crop that dimension of the image. If its components have non-positive values, the corresponding size of input image will be used. for example: if the spatial size of input data is [40, 40, 40] and roi_size=[32, 64, -1], the spatial size of output data will be [32, 40, 40].

lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

- __call__(img, lazy=None)[source]#

Apply the transform to img, assuming img is channel-first and slicing doesn’t apply to the channel dim.

- compute_slices(spatial_size)[source]#

Compute the crop slices based on specified center & size or start & end or slices.

- Parameters:

roi_center – voxel coordinates for center of the crop ROI.

roi_size – size of the crop ROI, if a dimension of ROI size is larger than image size, will not crop that dimension of the image.

roi_start – voxel coordinates for start of the crop ROI.

roi_end – voxel coordinates for end of the crop ROI, if a coordinate is out of image, use the end coordinate of image.

roi_slices – list of slices for each of the spatial dimensions.

- Return type:

tuple[slice]

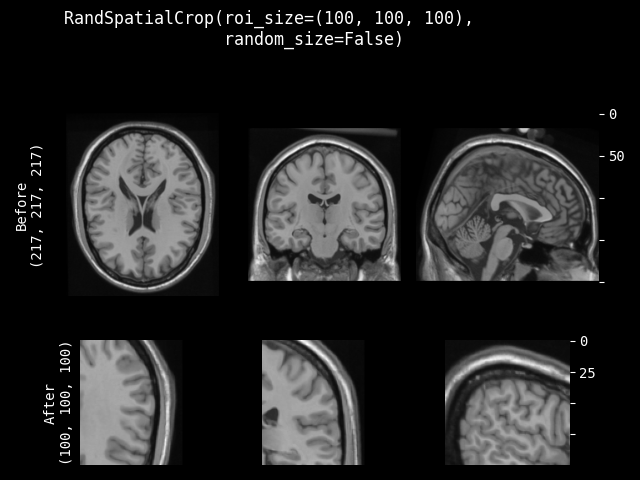

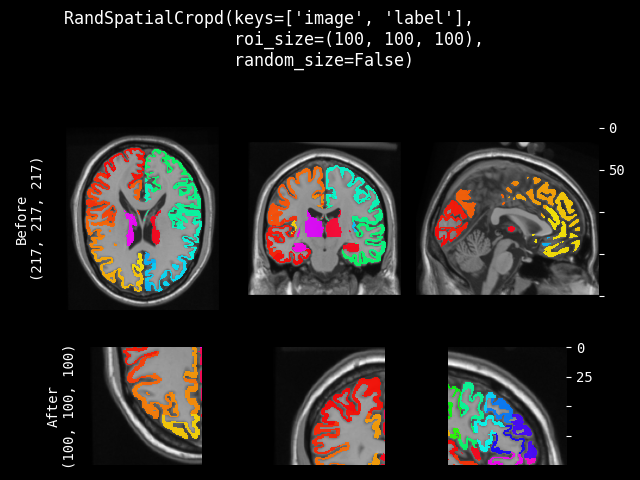

RandSpatialCrop#

- class monai.transforms.RandSpatialCrop(roi_size, max_roi_size=None, random_center=True, random_size=False, lazy=False)[source]#

Crop image with random size or specific size ROI. It can crop at a random position as center or at the image center. And allows to set the minimum and maximum size to limit the randomly generated ROI.

Note: even random_size=False, if a dimension of the expected ROI size is larger than the input image size, will not crop that dimension. So the cropped result may be smaller than the expected ROI, and the cropped results of several images may not have exactly the same shape.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

roi_size – if random_size is True, it specifies the minimum crop region. if random_size is False, it specifies the expected ROI size to crop. e.g. [224, 224, 128] if a dimension of ROI size is larger than image size, will not crop that dimension of the image. If its components have non-positive values, the corresponding size of input image will be used. for example: if the spatial size of input data is [40, 40, 40] and roi_size=[32, 64, -1], the spatial size of output data will be [32, 40, 40].

max_roi_size – if random_size is True and roi_size specifies the min crop region size, max_roi_size can specify the max crop region size. if None, defaults to the input image size. if its components have non-positive values, the corresponding size of input image will be used.

random_center – crop at random position as center or the image center.

random_size – crop with random size or specific size ROI. if True, the actual size is sampled from randint(roi_size, max_roi_size + 1).

lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

- __call__(img, randomize=True, lazy=None)[source]#

Apply the transform to img, assuming img is channel-first and slicing doesn’t apply to the channel dim.

- randomize(img_size)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all

self.Rcalls happen here so that we have a better chance to identify errors of sync the random state.This method can generate the random factors based on properties of the input data.

- Raises:

NotImplementedError – When the subclass does not override this method.

- Return type:

None

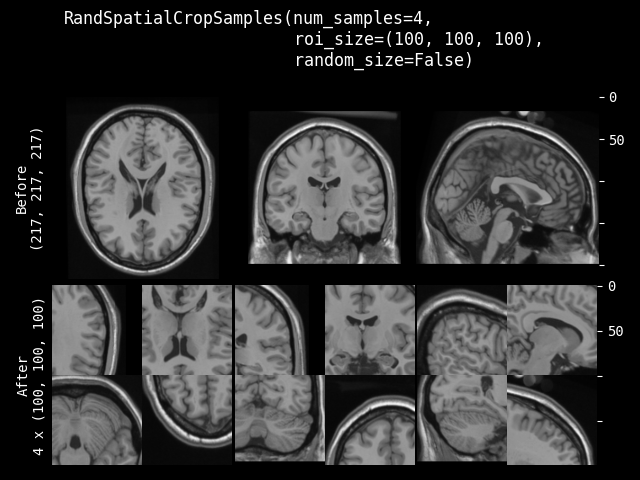

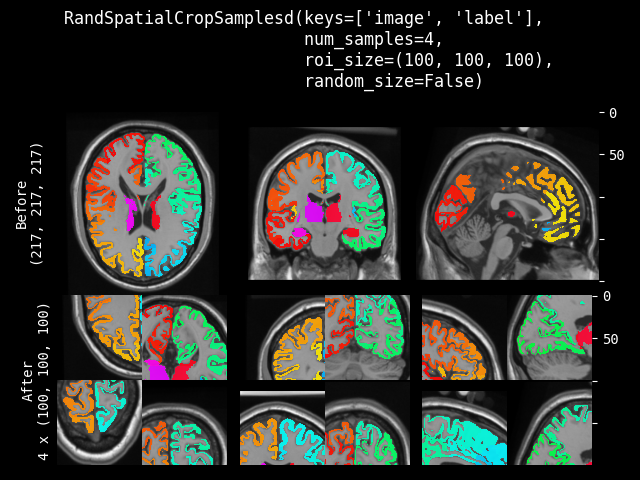

RandSpatialCropSamples#

- class monai.transforms.RandSpatialCropSamples(roi_size, num_samples, max_roi_size=None, random_center=True, random_size=False, lazy=False)[source]#

Crop image with random size or specific size ROI to generate a list of N samples. It can crop at a random position as center or at the image center. And allows to set the minimum size to limit the randomly generated ROI. It will return a list of cropped images.

Note: even random_size=False, if a dimension of the expected ROI size is larger than the input image size, will not crop that dimension. So the cropped result may be smaller than the expected ROI, and the cropped results of several images may not have exactly the same shape.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

roi_size – if random_size is True, it specifies the minimum crop region. if random_size is False, it specifies the expected ROI size to crop. e.g. [224, 224, 128] if a dimension of ROI size is larger than image size, will not crop that dimension of the image. If its components have non-positive values, the corresponding size of input image will be used. for example: if the spatial size of input data is [40, 40, 40] and roi_size=[32, 64, -1], the spatial size of output data will be [32, 40, 40].

num_samples – number of samples (crop regions) to take in the returned list.

max_roi_size – if random_size is True and roi_size specifies the min crop region size, max_roi_size can specify the max crop region size. if None, defaults to the input image size. if its components have non-positive values, the corresponding size of input image will be used.

random_center – crop at random position as center or the image center.

random_size – crop with random size or specific size ROI. The actual size is sampled from randint(roi_size, img_size).

lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

- Raises:

ValueError – When

num_samplesis nonpositive.

- __call__(img, lazy=None)[source]#

Apply the transform to img, assuming img is channel-first and cropping doesn’t change the channel dim.

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- randomize(data=None)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all

self.Rcalls happen here so that we have a better chance to identify errors of sync the random state.This method can generate the random factors based on properties of the input data.

- Raises:

NotImplementedError – When the subclass does not override this method.

- set_random_state(seed=None, state=None)[source]#

Set the random state locally, to control the randomness, the derived classes should use

self.Rinstead of np.random to introduce random factors.- Parameters:

seed – set the random state with an integer seed.

state – set the random state with a np.random.RandomState object.

- Raises:

TypeError – When

stateis not anOptional[np.random.RandomState].- Returns:

a Randomizable instance.

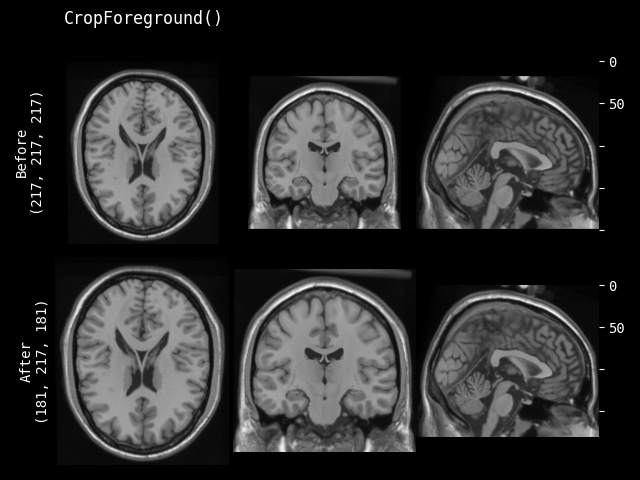

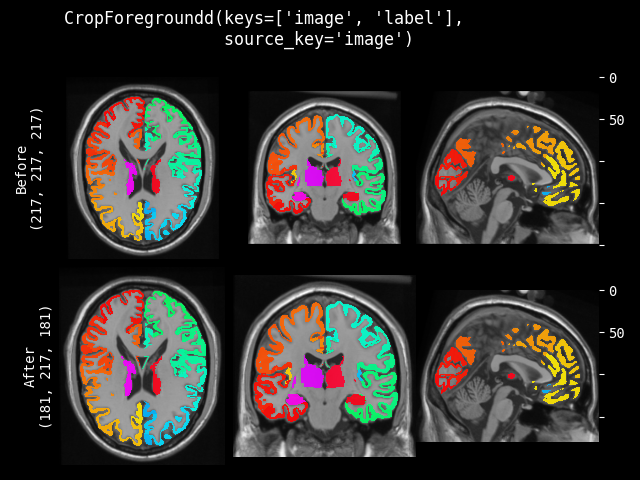

CropForeground#

- class monai.transforms.CropForeground(select_fn=<function is_positive>, channel_indices=None, margin=0, allow_smaller=True, return_coords=False, k_divisible=1, mode=constant, lazy=False, **pad_kwargs)[source]#

Crop an image using a bounding box. The bounding box is generated by selecting foreground using select_fn at channels channel_indices. margin is added in each spatial dimension of the bounding box. The typical usage is to help training and evaluation if the valid part is small in the whole medical image. Users can define arbitrary function to select expected foreground from the whole image or specified channels. And it can also add margin to every dim of the bounding box of foreground object. For example:

image = np.array( [[[0, 0, 0, 0, 0], [0, 1, 2, 1, 0], [0, 1, 3, 2, 0], [0, 1, 2, 1, 0], [0, 0, 0, 0, 0]]]) # 1x5x5, single channel 5x5 image def threshold_at_one(x): # threshold at 1 return x > 1 cropper = CropForeground(select_fn=threshold_at_one, margin=0) print(cropper(image)) [[[2, 1], [3, 2], [2, 1]]]

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- __call__(img, mode=None, lazy=None, **pad_kwargs)[source]#

Apply the transform to img, assuming img is channel-first and slicing doesn’t change the channel dim.

- __init__(select_fn=<function is_positive>, channel_indices=None, margin=0, allow_smaller=True, return_coords=False, k_divisible=1, mode=constant, lazy=False, **pad_kwargs)[source]#

- Parameters:

select_fn – function to select expected foreground, default is to select values > 0.

channel_indices – if defined, select foreground only on the specified channels of image. if None, select foreground on the whole image.

margin – add margin value to spatial dims of the bounding box, if only 1 value provided, use it for all dims.

allow_smaller – when computing box size with margin, whether to allow the image edges to be smaller than the final box edges. If False, part of a padded output box might be outside of the original image, if True, the image edges will be used as the box edges. Default to True.

return_coords – whether return the coordinates of spatial bounding box for foreground.

k_divisible – make each spatial dimension to be divisible by k, default to 1. if k_divisible is an int, the same k be applied to all the input spatial dimensions.

mode – available modes for numpy array:{

"constant","edge","linear_ramp","maximum","mean","median","minimum","reflect","symmetric","wrap","empty"} available modes for PyTorch Tensor: {"constant","reflect","replicate","circular"}. One of the listed string values or a user supplied function. Defaults to"constant". See also: https://numpy.org/doc/1.18/reference/generated/numpy.pad.html https://pytorch.org/docs/stable/generated/torch.nn.functional.pad.htmllazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

pad_kwargs – other arguments for the np.pad or torch.pad function. note that np.pad treats channel dimension as the first dimension.

- compute_bounding_box(img)[source]#

Compute the start points and end points of bounding box to crop. And adjust bounding box coords to be divisible by k.

- Return type:

tuple[ndarray,ndarray]

- crop_pad(img, box_start, box_end, mode=None, lazy=False, **pad_kwargs)[source]#

Crop and pad based on the bounding box.

- inverse(img)[source]#

Inverse of

__call__.- Raises:

NotImplementedError – When the subclass does not override this method.

- Return type:

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- property requires_current_data#

Get whether the transform requires the input data to be up to date before the transform executes. Such transforms can still execute lazily by adding pending operations to the output tensors. :returns: True if the transform requires its inputs to be up to date and False if it does not

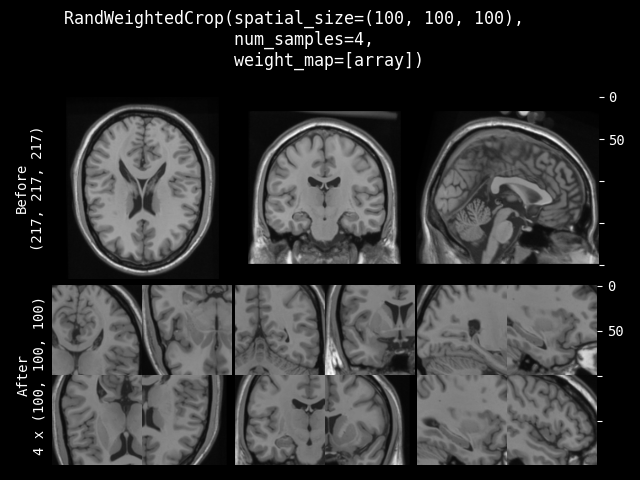

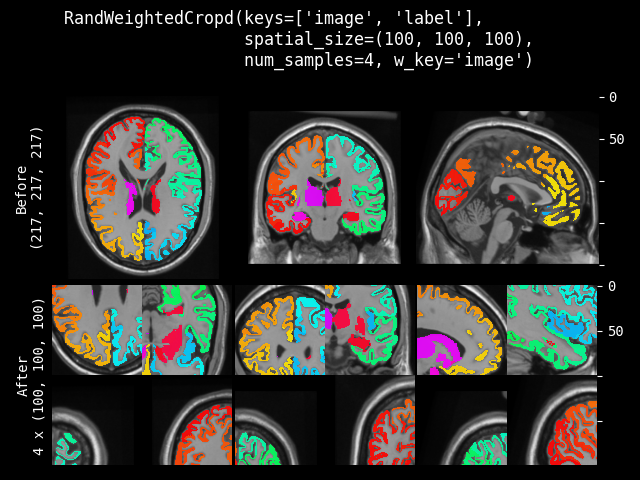

RandWeightedCrop#

- class monai.transforms.RandWeightedCrop(spatial_size, num_samples=1, weight_map=None, lazy=False)[source]#

Samples a list of num_samples image patches according to the provided weight_map.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

spatial_size – the spatial size of the image patch e.g. [224, 224, 128]. If its components have non-positive values, the corresponding size of img will be used.

num_samples – number of samples (image patches) to take in the returned list.

weight_map – weight map used to generate patch samples. The weights must be non-negative. Each element denotes a sampling weight of the spatial location. 0 indicates no sampling. It should be a single-channel array in shape, for example, (1, spatial_dim_0, spatial_dim_1, …).

lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

- __call__(img, weight_map=None, randomize=True, lazy=None)[source]#

- Parameters:

img – input image to sample patches from. assuming img is a channel-first array.

weight_map – weight map used to generate patch samples. The weights must be non-negative. Each element denotes a sampling weight of the spatial location. 0 indicates no sampling. It should be a single-channel array in shape, for example, (1, spatial_dim_0, spatial_dim_1, …)

randomize – whether to execute random operations, default to True.

lazy – a flag to override the lazy behaviour for this call, if set. Defaults to None.

- Returns:

A list of image patches

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- randomize(weight_map)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all

self.Rcalls happen here so that we have a better chance to identify errors of sync the random state.This method can generate the random factors based on properties of the input data.

- Raises:

NotImplementedError – When the subclass does not override this method.

- Return type:

None

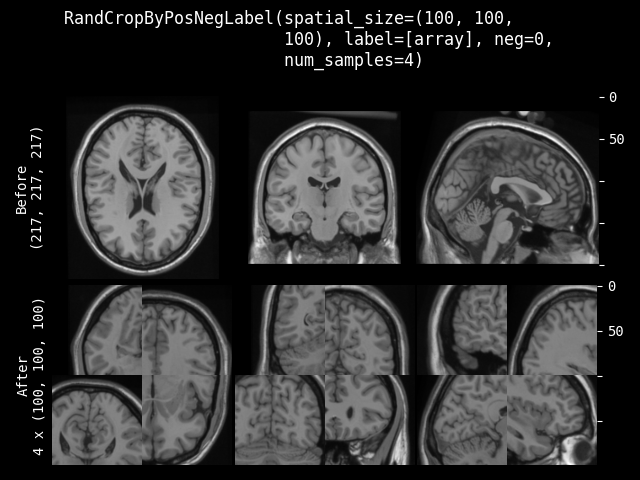

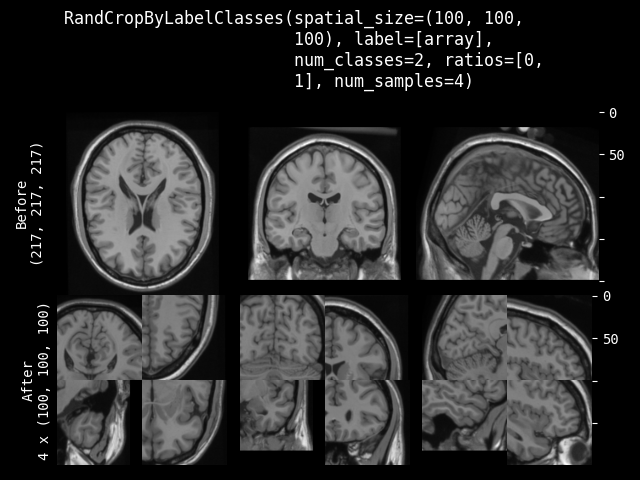

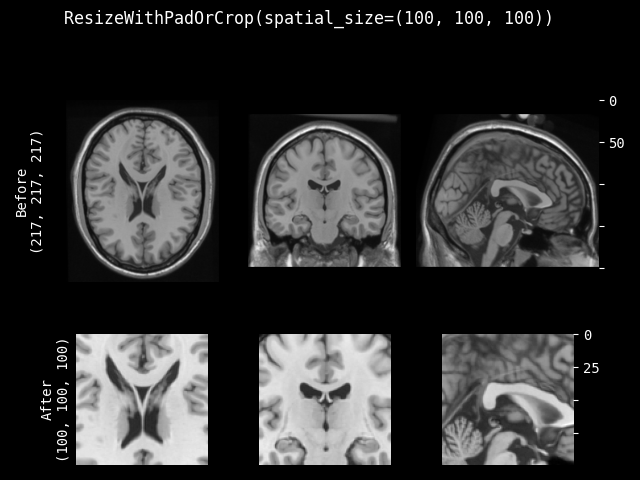

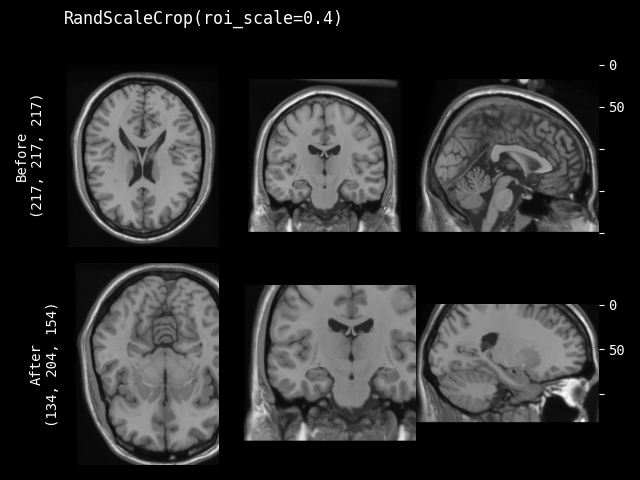

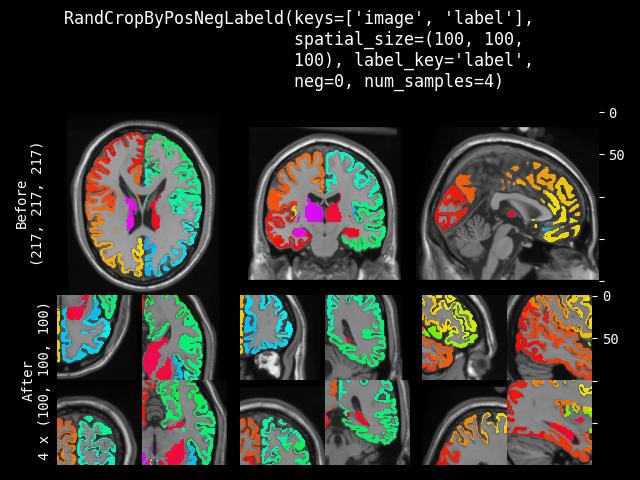

RandCropByPosNegLabel#

- class monai.transforms.RandCropByPosNegLabel(spatial_size, label=None, pos=1.0, neg=1.0, num_samples=1, image=None, image_threshold=0.0, fg_indices=None, bg_indices=None, allow_smaller=False, lazy=False)[source]#

Crop random fixed sized regions with the center being a foreground or background voxel based on the Pos Neg Ratio. And will return a list of arrays for all the cropped images. For example, crop two (3 x 3) arrays from (5 x 5) array with pos/neg=1:

[[[0, 0, 0, 0, 0], [0, 1, 2, 1, 0], [[0, 1, 2], [[2, 1, 0], [0, 1, 3, 0, 0], --> [0, 1, 3], [3, 0, 0], [0, 0, 0, 0, 0], [0, 0, 0]] [0, 0, 0]] [0, 0, 0, 0, 0]]]

If a dimension of the expected spatial size is larger than the input image size, will not crop that dimension. So the cropped result may be smaller than expected size, and the cropped results of several images may not have exactly same shape. And if the crop ROI is partly out of the image, will automatically adjust the crop center to ensure the valid crop ROI.

This transform is capable of lazy execution. See the Lazy Resampling topic for more information.

- Parameters:

spatial_size – the spatial size of the crop region e.g. [224, 224, 128]. if a dimension of ROI size is larger than image size, will not crop that dimension of the image. if its components have non-positive values, the corresponding size of label will be used. for example: if the spatial size of input data is [40, 40, 40] and spatial_size=[32, 64, -1], the spatial size of output data will be [32, 40, 40].

label – the label image that is used for finding foreground/background, if None, must set at self.__call__. Non-zero indicates foreground, zero indicates background.

pos – used with neg together to calculate the ratio

pos / (pos + neg)for the probability to pick a foreground voxel as a center rather than a background voxel.neg – used with pos together to calculate the ratio

pos / (pos + neg)for the probability to pick a foreground voxel as a center rather than a background voxel.num_samples – number of samples (crop regions) to take in each list.

image – optional image data to help select valid area, can be same as img or another image array. if not None, use

label == 0 & image > image_thresholdto select the negative sample (background) center. So the crop center will only come from the valid image areas.image_threshold – if enabled image, use

image > image_thresholdto determine the valid image content areas.fg_indices – if provided pre-computed foreground indices of label, will ignore above image and image_threshold, and randomly select crop centers based on them, need to provide fg_indices and bg_indices together, expect to be 1 dim array of spatial indices after flattening. a typical usage is to call FgBgToIndices transform first and cache the results.

bg_indices – if provided pre-computed background indices of label, will ignore above image and image_threshold, and randomly select crop centers based on them, need to provide fg_indices and bg_indices together, expect to be 1 dim array of spatial indices after flattening. a typical usage is to call FgBgToIndices transform first and cache the results.

allow_smaller – if False, an exception will be raised if the image is smaller than the requested ROI in any dimension. If True, any smaller dimensions will be set to match the cropped size (i.e., no cropping in that dimension).

lazy – a flag to indicate whether this transform should execute lazily or not. Defaults to False.

- Raises:

ValueError – When

posornegare negative.ValueError – When

pos=0andneg=0. Incompatible values.

- __call__(img, label=None, image=None, fg_indices=None, bg_indices=None, randomize=True, lazy=None)[source]#

- Parameters:

img – input data to crop samples from based on the pos/neg ratio of label and image. Assumes img is a channel-first array.

label – the label image that is used for finding foreground/background, if None, use self.label.

image – optional image data to help select valid area, can be same as img or another image array. use

label == 0 & image > image_thresholdto select the negative sample(background) center. so the crop center will only exist on valid image area. if None, use self.image.fg_indices – foreground indices to randomly select crop centers, need to provide fg_indices and bg_indices together.

bg_indices – background indices to randomly select crop centers, need to provide fg_indices and bg_indices together.

randomize – whether to execute the random operations, default to True.

lazy – a flag to override the lazy behaviour for this call, if set. Defaults to None.

- property lazy#

Get whether lazy evaluation is enabled for this transform instance. :returns: True if the transform is operating in a lazy fashion, False if not.

- randomize(label=None, fg_indices=None, bg_indices=None, image=None)[source]#

Within this method,

self.Rshould be used, instead of np.random, to introduce random factors.all